AI as Normal Technology: Investing Beyond the Illusion of Magic

General-purpose technologies diffuse slowly and unevenly. AI will follow similar patterns. Value creation depends on when bottlenecks collapse, not benchmark scores.

Executive Summary

General-purpose technologies rarely change the world overnight. Electricity, cars, the internet, and biotech all diffused slowly, unevenly, and only after infrastructure was built and institutions adjusted. AI will be no different in kind, though likely faster in tempo. What matters is not the brilliance of each new demo, but when bottlenecks collapse.

The Frame

AI progress is cumulative. Transformers, scaling laws, retrieval, chain-of-thought, tool use: these were iterations, not ruptures, built on decades of research. Hype presents them as leaps toward AGI, but history suggests a more sober lens: adoption is shaped by economics, integration, and trust, not by benchmark scores.

This reframing matters for venture capital. Value does not accrue to mere capability. It accrues where adoption frictions disappear: where compute becomes accessible, data usable, models reliable, workflows interoperable, and compliance manageable. The hype focuses on capability; the constraint remains usability and trust.

The Bottlenecks

Adoption today is slowed by structural frictions no less real than grids for electricity or broadband for the internet:

- Compute & power: affordable, predictable access, including energy supply and network locality, is essential for deployment at scale.

- Data readiness: poor quality, silos, and compliance restrictions make enterprise integration fragile. At the frontier, training data scarcity looms; synthetic data offers potential but raises risks of compounding errors and credibility loss.

- Reliability & evaluation: benchmark gains rarely translate into production reliability. Enterprises need predictable error profiles, credible reporting, and procurement-grade evaluation standards.

- Talent & organisational change: most firms lack the engineers to integrate AI reliably, the product talent to embed it into workflows, and leadership willing to take strategic bets under uncertainty.

- Interoperability: integration remains artisanal. Protocols like the Model Context Protocol (MCP) could standardise connections, but consolidation has not yet arrived.

- Cost predictability: token pricing, latency, and egress fees remain opaque, slowing CFO approval for scaled deployment.

- Compliance & regulation: liability and explainability shape procurement confidence. The EU AI Act and sectoral regulators already define tempo.

- Security: prompt injection, data exfiltration, and supply-chain risks make large-scale deployments risky without hardened defences.

- Sectoral unevenness: creative and consumer sectors tolerate error and move fast; healthcare, finance, and defence demand explainability, provenance, and accountability.

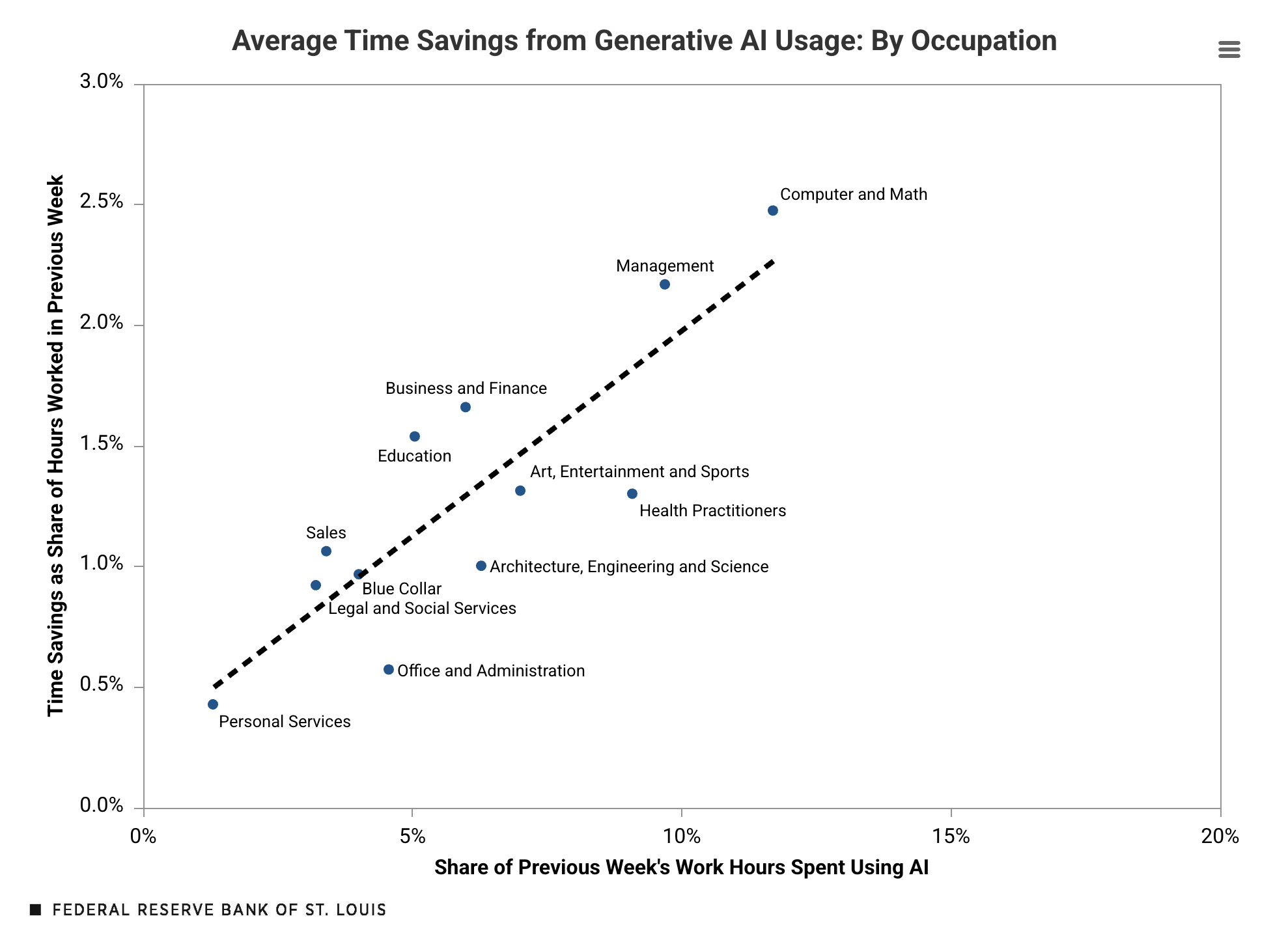

Surveys show adoption is rising, far higher today than the 0.5–3.5% of work hours recorded in early 2024, but usage still lags behind the hype cycle. Experimentation remains broader than integration.

Hype vs Reality

- GPT-5: marketed as rupture, delivered UX refinements. Adoption still gated by trust, compliance, and predictable reliability.

- Reasoning models: strong on benchmarks, fragile and costly in practice. Latency in seconds, not milliseconds, makes them unusable for most workflows.

- Agents & MCP: pitched as co-workers, but still falling short. MCP hints at a future of seamless interoperability, but reliability and security must follow.

Across these cases, performance also matters: bigger context windows, lower latency, reduced error rates. These are not cosmetic improvements; they are adoption enablers.

Implications for Venture Capital

Durable value will accrue where bottlenecks collapse:

- Infrastructure rails: compute, data pipelines, observability, guardrails.

- Performance thresholds: adoption unlocks when latency, reliability, and error rates hit enterprise-grade standards.

- Interoperability standards: protocols may consolidate into non-negotiable rails, or remain fragmented with orchestration layers dominating.

But identifying where value will accrue is not enough. Investing under uncertainty requires discipline:

- Surfacing user frictions: reference calls and ground truth matter more than benchmarks. The question is what users actually fear or prioritise (integration cost, liability, vendor lock-in, resourcing, etc.). Users can’t always describe the transformative solution, but they reveal what adoption depends on.

- Timing entries: bottlenecks don’t erode gradually; they tend to collapse. Capital must enter just before tipping points, early enough to ride diffusion, not so early that it sits stranded in speculative capacity.

- Balancing across strategies: portfolios should spread exposure across: (i) large Foundation Models (FMs) that demand capital and patience, (ii) vertical FMs with proprietary data moats, (iii) small efficient models driving accessibility, and (iv) applications that collapse immediate, visible bottlenecks (“the Lovables”). Each has different risk-return dynamics; balance, not concentration, is the hedge against uneven adoption.

- Protocol risk management: standards can create monopolistic winners or stranded assets.

In short: the edge lies not in betting on “intelligence” itself, but in judging which teams are positioned to collapse frictions, time inflections, and convert adoption into lasting advantage.

The Real Work Ahead

AI’s trajectory will not be decided by the next benchmark or demo. It will be decided by the collapse of bottlenecks, the trust of institutions, and the resilience of deployment. For investors, policymakers, and societies alike, the frontier is not capability, it is adoption.

Full Article

Every new model release in AI arrives wrapped in a narrative of rupture.

OpenAI initially withheld GPT-2, citing potential misuse. Their blog noted in 2019: “Due to our concerns about malicious applications of the technology, we are not releasing the trained model.” GPT-4 was framed as emergent intelligence surpassing human comprehension, while GPT-5 was speculated to be a civilisation-altering force.

The same storyline repeats with each frontier: agents presented as autonomous co-workers, multimodality as a step toward human-like perception, and benchmark gains on tasks like GSM8K or Big-Bench Hard promoted as proof that models have finally learned to reason. Each wave is sold as the decisive step toward artificial general intelligence (AGI).

The reality, however, is more sober. These systems are extraordinary, but they advance through increments, not discontinuities.

Like electricity or the internet (and even if it does not feel like it), AI is a normal technology, in the sense proposed by Arvind Narayanan & Sayash Kapoor in their eponymous essay (2024) and in their follow-up piece (2025). Indeed, AI follows recognisable patterns of invention, diffusion, institutional adaptation, and economic integration (just perhaps on a faster clock). This interpretation rests on the assumption that recursive self-improvement will not yield superintelligence. For an alternative view, see the counter-narrative.

It does not diminish AI’s importance. It reframes it: progress is cumulative, diffusion is gradual, and value creation follows patterns that are observable in other technological waves.

For venture capital, this shift in framing matters: if AI is a normal technology, then the real frontier is not (just) raw capability, but adoption. It is not about anticipating miracles, but about understanding when and how adoption bottlenecks (will) collapse, and positioning capital accordingly.

I. From Celebrated Breakthroughs to Diffusive Realities

The “AI as Normal Technology” perspective rests on three propositions:

- Description: AI progress is cumulative.

What appear as breakthroughs are in fact steps built on decades of scaffolding. The latest deep learning boom itself traces back to ImageNet in 2012, which showed the power of GPUs and large datasets. Transformers followed in 2017, offering a general-purpose architecture. Scaling laws were formalised in 2020. GPT-3, in that same year, was less a rupture than the logical next step: 175B parameters trained under those laws. Retrieval-augmented generation (2020), chain-of-thought prompting (2022), and tool use (2023) are not ruptures but iterations. All are grounded in almost a century of accumulated research in natural language processing, machine learning, and compute hardware. Each leap is a refinement of the architectures, an expansion of datasets, or a simplification of interfaces and workflows. Progress is best understood as compounding increments, not singular ruptures.

- Prediction: AI’s broad societal impact will unfold over decades.

Diffusion is rarely immediate. Everett Rogers described S-curves of adoption: innovators, early adopters, early majority, late majority, laggards. Carlota Perez framed technological waves as installation phases (speculative over-investment) followed by deployment phases (broad integration). Electricity took over 40 years to reach 80% of U.S. households. Automobiles required roads, better tires, fueling infrastructure, and traffic codes before mass adoption. The internet needed broadband and secure payments. Biotech breakthroughs like CRISPR (discovered in 2012) required over a decade to move from discovery to therapeutic trials (yet its rapid deployment during COVID-19, powering diagnostics and accelerating vaccine R&D, also showed how institutional readiness can suddenly unlock adoption at scale). AI will (probably) not be different in kind, though perhaps faster in tempo: adoption will proceed sector by sector, gated by unit economics, reliability, workflow integration, and regulatory trust. In this sense, AI diffusion is normal: predictable in shape, if not in speed.

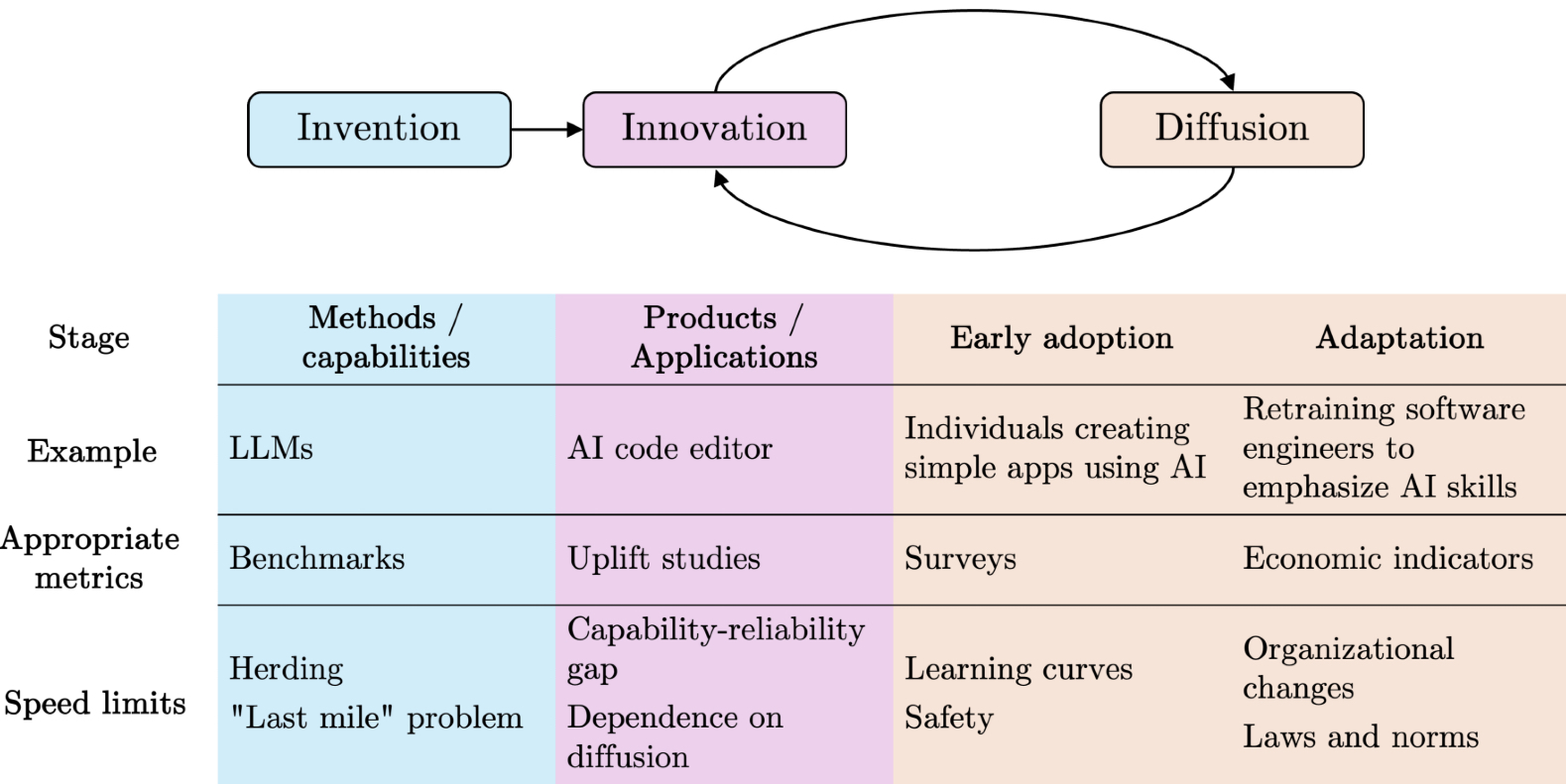

Like other general-purpose technologies, the impact of AI is materialised not when methods and capabilities improve, but when those improvements are translated into applications and are diffused through productive sectors of the economy.

- Prescription: policies and investments should focus on adoption.

Rather than fixating on speculative endgames (whether utopian transcendence or catastrophic collapse) the real work lies in building the conditions that make adoption both possible and sustainable. Adoption depends on infrastructure, governance, and incentives.

Three dimensions matter in particular:

- Enabling adoption: this means lowering the practical barriers that prevent organisations from integrating AI: standardised evaluation frameworks that reduce buyer uncertainty, interoperability protocols that connect models to enterprise data, and robust data pipelines that are governed, documented, and secured. Without these enablers, adoption remains confined to pilots and proofs of concept.

- Managing risks: as systems enter workflows, new risks emerge: bias amplified in hiring, hallucinations in healthcare, cascading errors in supply chains. These are not abstract scenarios but concrete adoption risks. They must be managed through monitoring, liability frameworks, and safety protocols. The lesson from previous waves (aviation, cars, pharmaceuticals, finance) is that regulation matters, but regulation and risk management co-evolve with adoption; they do not precede it.

- Ensuring resilience: no technology diffuses without shocks. Electrical grids suffered blackouts, the internet endured worms and crashes, biotech faced trial halts. Resilience means creating institutions and redundancies that absorb these shocks without derailing long-term deployment. For AI, that includes stress-testing models, ensuring diversity of suppliers, and building legal frameworks that can adapt as capabilities evolve.

Seen this way, the path to impact is not about speculative AGI moments. It is about whether adoption enablers, risk management, and resilience mechanisms are in place. This is where capital allocation (and policy choices) have leverage: they shape the tempo of diffusion.
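The S-curve shape invoked under the Prediction proposition can be made concrete with a logistic function, one common way (among several) to model diffusion. The sketch below is illustrative only: the function names and the `slow` and `fast` parameter sets are hypothetical and are not fitted to any historical data. It shows how the same curve shape can run on very different clocks.

```python
import math


def logistic_adoption(t, midpoint, rate, ceiling=1.0):
    """Share of adopters at time t (in years) under a logistic S-curve."""
    return ceiling / (1.0 + math.exp(-rate * (t - midpoint)))


def years_to_reach(share, midpoint, rate, ceiling=1.0):
    """Invert the curve: the time at which adoption reaches `share`."""
    if not 0.0 < share < ceiling:
        raise ValueError("share must lie strictly between 0 and ceiling")
    return midpoint - math.log(ceiling / share - 1.0) / rate


# Hypothetical parameters, chosen only to illustrate tempo (not fitted to data).
slow = dict(midpoint=25.0, rate=0.2)  # a slow, infrastructure-bound wave
fast = dict(midpoint=10.0, rate=0.5)  # same shape, faster clock

for label, params in (("slow", slow), ("fast", fast)):
    print(label, "reaches 80% after", round(years_to_reach(0.8, **params), 1), "years")
```

Under this simplification, "same in kind, faster in tempo" means a smaller `midpoint` and a larger `rate`, not a different functional form.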

This framing resists technological determinism. AI does not reconfigure society on its own. Institutions, regulations, cultural practices, and infrastructures mediate its effects. The technology sets possibilities; society, through institutions and cultural practices, decides pathways.

For investors, this means hype is dangerous. Capital should not be allocated based on the illusion of miracles, but on the structural drivers that allow diffusion. Diffusion bottlenecks abound for every technological wave and history is blunt: electricity required grids, the internet needed broadband and payment rails, and AI will require affordable compute, evaluation standards, model reliability, and institutional trust. Those who invest in these enablers will shape the deployment phase, and capture its value.

II. Diffusion and the Adoption Bottleneck

AI today sits at the juncture between installation and deployment, to use Carlota Perez’s terms. Installation has been (is?) spectacular: billions poured into GPU clusters, training runs at astronomical cost, valuations inflated by AGI promises. But this phase, for all its capital intensity, is largely speculative capacity. Deployment, embedding AI into workflows, institutions, and economic routines, is only beginning.

But technologies rarely fail because they don’t work in labs. They fail because they don’t diffuse. For AI, diffusion is slowed by structural frictions that are no less real than grids for electricity or broadband for the internet.

Among the most critical today:

- Power and compute capacity: access to GPUs is only part of the story; power grids, data-center locality, and network latency also determine where and how AI can run. Surging demand strains electricity supply in key regions, while geographic concentration raises resilience and sovereignty concerns.

- Data readiness: most enterprise AI projects fail not because of models, but because of inputs. Poor quality data, fragmented silos, and compliance restrictions make integration fragile. The bottleneck extends beyond enterprise integration: at the frontier, access to high-quality training corpora is drying up. Synthetic data is often proposed as a solution, but it introduces its own challenges: compounding model errors, homogenisation, and questions of credibility.

- Reliability and evaluation: benchmark competence rarely translates to production reliability. Enterprises need predictable error profiles and standardised evaluations. HAL, the Foundation Model Transparency Index and similar initiatives are steps in this direction, but adoption stalls without credible reporting and procurement-grade metrics.

- Talent: adoption is not only technical but human. Many firms lack the engineers to build reliable integrations (let alone to adapt models to their own data), the product talent to translate capabilities into workflows, and the risk expertise to evaluate exposure. At the leadership level, the challenge is not ignorance but the difficulty of making high-stakes decisions in such a fast-moving environment. This dual shortage, of skilled practitioners on the ground and of sponsors able to steer strategy at the top, makes adoption difficult.

- Integration and interoperability: connecting models to workflows is still artisanal. Emerging protocols such as the Model Context Protocol (MCP) hint at a future where agents plug into data and tools as easily as USB-C connects hardware. Until such standards mature, integration costs are prohibitive.

- Cost predictability: inference prices have dropped, but economics remain opaque: token pricing, context windows, egress fees, and latency SLAs are shifting variables. CFOs hesitate to approve scale deployments without predictable unit economics.

- Regulation and compliance: liability, explainability, and regulatory clarity act as gatekeepers. The EU AI Act will phase in obligations through 2026-2027, but uncertainty already slows procurement. Without confidence that deployments are defensible to regulators and boards, adoption stalls.

- Security: AI systems open new attack surfaces: prompt injection, data exfiltration, model poisoning, and supply-chain vulnerabilities. Enterprises cannot deploy at scale without guarantees that these risks are understood and mitigated.

- Sectoral constraints: diffusion does not advance uniformly. Creative and consumer sectors move quickly, where tolerance for error is higher. Regulated domains like healthcare, finance, and defense move slowly, demanding explainability, provenance, and legal accountability before adoption can scale.
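The cost-predictability friction in the list above can be illustrated with a back-of-the-envelope unit-economics calculation. This is a minimal sketch under stated assumptions: the `Pricing` class, the prices, the token counts, and the traffic volumes are all hypothetical, and real bills also include items this sketch ignores (egress fees, caching discounts, latency SLAs).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens (hypothetical)
    output_per_million: float  # USD per 1M output tokens (hypothetical)


def cost_per_call(p, input_tokens, output_tokens):
    """Cost in USD of a single model call."""
    return (input_tokens * p.input_per_million
            + output_tokens * p.output_per_million) / 1_000_000


def monthly_cost(p, requests_per_day, input_tokens, output_tokens,
                 calls_per_request=1, days=30):
    """Monthly spend; calls_per_request > 1 models multi-step agent workflows."""
    per_request = cost_per_call(p, input_tokens, output_tokens) * calls_per_request
    return per_request * requests_per_day * days


example = Pricing(input_per_million=1.0, output_per_million=4.0)
single_call = monthly_cost(example, 10_000, 2_000, 500)
agentic = monthly_cost(example, 10_000, 2_000, 500, calls_per_request=8)
print(f"single-call workflow: ${single_call:,.0f}/month")
print(f"8-step agent workflow: ${agentic:,.0f}/month")
```

Even in this toy model, the bill scales with variables a finance team cannot easily fix in advance (tokens per call, calls per request), which is one way to read the hesitation described above.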

These frictions explain why adoption remains shallow despite hype. Earlier studies in 2024 suggested that only 0.5-3.5% of work hours were assisted by generative AI overall. More recent evidence points to higher usage among active users (6-25% of work hours in the past week for workers who have used AI recently). For many organisations, experimentation continues to outpace meaningful integration.

For venture capital, these bottlenecks are where value concentrates. The decisive question is no longer “what can the model do in principle?” but “what workflows are being done differently, at scale, with positive unit economics?” The startups that will define the deployment era are those that collapse the frictions holding adoption back.

But before turning to the implications for venture capital, it is worth examining a few recent breakthrough announcements through this lens. Each was framed as magic, yet adoption remains the true judge of impact.

III. Illustrations: Hype vs Reality in Practice

The bottlenecks slowing AI diffusion show up clearly when we examine the technologies that have captured headlines over the past year. Each case demonstrates the gap between capability hype and adoption reality.

GPT-5

The run-up to GPT-5 was framed in familiar terms: a decisive break toward artificial general intelligence. The reality was more mundane but no less important. Improvements came in ergonomics: smoother routing between models, better multimodality, better UX. For enterprises, these refinements matter, but they do not collapse the adoption bottlenecks of compliance, reliability or cost predictability.

Inference costs are indeed falling, especially on older, commoditised models. But organisations are reluctant to default to cheaper models if doing so introduces reliability risk. In production, ROI is determined less by cost per token than by whether a system behaves consistently (or at least feels under control: small deviations rarely matter in the end; what matters is avoiding catastrophic errors) and can be defended in front of regulators or customers. Reliability and evaluation are the filters that decide which models are usable.

GPT-5 thus illustrates a recurring pattern: benchmark progress can look like rupture in marketing but translate into incremental gains in deployment. Costs may fall, but unless reliability and evaluation frameworks give buyers confidence, adoption will remain gated. The hype focuses on capability; the constraint remains trust.

Thinking models

When labs began releasing ‘reasoning’ models, benchmark results on datasets like GSM8K or Big-Bench Hard were sold as proof that models could now ‘think’. The framing was that reasoning had been unlocked, an evident rupture. In practice, adoption data tells a different story: in production, fewer than 1% of workflows rely on these models.

The bottlenecks are concrete. These systems are slower and more resource-intensive, with latency measured in seconds or minutes rather than milliseconds, too slow for the majority of customer-facing products or real-time enterprise workflows. They are also costly, especially when scaled, and brittle outside the narrow distributions of test benchmarks. Organisations hesitate to redesign processes around models whose responsiveness and reliability cannot be guaranteed.

Here, the constraint is not capability but adoption depth. The models can pass reasoning benchmarks, but without predictable latency, manageable costs, and integration into enterprise workflows, they remain impractical. Until firms can absorb the reliability tradeoffs and redesign processes around them, reasoning models will stay confined to the margins of production.

Agents and the Model Context Protocol (MCP)

Agents have been marketed as autonomous co-workers capable of handling complex tasks end-to-end: booking travel, reconciling spreadsheets, drafting code, even negotiating across systems. The narrative suggests a decisive shift toward AI as a labor substitute (or at the very least as ‘co-pilots’). In practice, most agents remain prototypes, unable to survive beyond controlled demos. Integration is costly, because every deployment requires bespoke pipelines. Latency and cost compound the problem here too: multi-step agent workflows often take minutes and cost dollars to resolve, making them unusable in the same customer-facing or real-time environments. Reliability is another hurdle: without guardrails, agents loop, stall, or misuse tools. And security teams balk at the new attack surfaces agents introduce: prompt injection, exfiltration, or unintended tool actions.

The emerging Model Context Protocol is significant because it addresses one of these frictions directly: interoperability. MCP standardises how agents discover tools and access data, promising to collapse today’s artisanal integration work. If adopted by operating systems and platforms, it could trigger an inflection, much like TCP/IP or USB did in earlier waves. But even with such a protocol in place, adoption will hinge on whether reliability, latency, and security are tamed.

Here, the bottleneck is not the capability of agents, but the absence of robust infrastructure. Without standards, better security, predictable performance, and trustworthy evaluation, agents cannot scale in usage. MCP may be the keystone, but it is only part of the structure that must be built to enable massive diffusion.

Taken together, these cases reveal a common truth: hype tends to narrate breakthroughs as ruptures, but adoption is slowed by reliability, cost, integration, and trust. Progress may look spectacular in benchmarks, but without collapsing bottlenecks, workflows will remain largely unchanged. The constraint is not what models can do in principle, but what institutions and organisations can reliably absorb in practice.

IV. Implications for Venture Capital

The lesson from these cases is clear: capabilities advance quickly, but adoption is gated by reliability, cost, integration, and trust. For venture investors, this distinction matters. Benchmarks and demos attract attention, but they rarely signal where durable value will accrue. The real opportunities lie in collapsing the bottlenecks that prevent workflows from changing at scale.

That does not mean performance is irrelevant. On the contrary, certain improvements in core model capability are precisely what unlock adoption: bigger context windows enable long-form document analysis; better handling of structured and tabular data makes enterprise integration viable; lower latency makes customer-facing agents practical; smaller error envelopes make deployment defensible in healthcare or finance. Performance gains matter when they close the gap between what models could do in theory and what organisations can trust in practice.

The sharper investment question, then, is not “who has the best model?” but “which capabilities directly collapse adoption bottlenecks, and who is positioned to deliver them?”

1. Where Value Concentrates: Collapsing Bottlenecks

The shift from installation to deployment depends on resolving a few evident bottlenecks: infrastructure, performance, and interoperability are not optional optimisations. They are the conditions that must be in place for generative AI to diffuse at scale.

Infrastructure rails

The most durable value lies in the rails that make adoption possible. This includes:

- data infrastructure: pipelines for collection, cleaning, labeling, governance, and compliance. This is the substrate on which every other layer depends.

- retrieval infrastructure: vector databases and hybrid search systems that ground outputs in enterprise knowledge and enable context-rich applications;

- inference orchestration: layers that optimise routing, batching, caching, and cost-performance tradeoffs across models, increasingly critical as enterprises mix providers and models;

- observability and evaluation: platforms that evolve into the equivalent of crash tests and safety certifications, monitoring reliability, bias, and drift in production;

- guardrails and safety layers: systems for policy enforcement, filtering, red-teaming, and attack resistance (prompt injection, exfiltration). These are rapidly moving from “nice-to-have” to procurement requirements.
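To make the inference-orchestration rail in the list above more tangible, here is a minimal routing sketch: pick the cheapest model that satisfies a workflow's latency and reliability thresholds, and refuse to route when none does. The `ModelOption` fields, the catalogue entries, and all numbers are hypothetical placeholders, not measurements of any real model or provider.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_call: float   # USD, hypothetical
    p95_latency_s: float   # seconds, hypothetical
    error_rate: float      # share of unacceptable outputs, hypothetical


def route(options: Sequence[ModelOption], max_latency_s: float,
          max_error_rate: float) -> Optional[ModelOption]:
    """Return the cheapest option meeting both thresholds, or None if none does."""
    eligible = [m for m in options
                if m.p95_latency_s <= max_latency_s
                and m.error_rate <= max_error_rate]
    return min(eligible, key=lambda m: m.cost_per_call) if eligible else None


catalogue = [
    ModelOption("small-distilled", 0.001, 0.4, 0.08),
    ModelOption("mid-generalist", 0.004, 0.9, 0.03),
    ModelOption("large-reasoning", 0.050, 12.0, 0.01),
]

chat = route(catalogue, max_latency_s=1.0, max_error_rate=0.05)
strict_realtime = route(catalogue, max_latency_s=1.0, max_error_rate=0.02)
print("customer chat ->", chat.name if chat else "no eligible model")
print("strict real-time ->", strict_realtime.name if strict_realtime else "no eligible model")
```

The design choice worth noting is that cost is only the tie-breaker: thresholds filter first, which mirrors the argument that reliability and latency, not price per token, decide which models are usable.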

Performance thresholds

As mentioned earlier, certain frontier advances will matter precisely because they collapse bottlenecks; the choice is not performance versus adoption. Context window expansion, structured data reasoning, latency reduction, and error-rate compression are not “nice-to-haves”: they determine whether new classes of workflows can be trusted to AI. The firms able to deliver these specific performance gains, whether at the model layer or through system-level optimisations, are well placed to capture adoption tailwinds.

Interoperability standards

Adoption lags when every workflow requires bespoke integration. The next wave of value could come from the protocols and standards that make models, agents, and enterprise systems interoperate seamlessly. Whether through emerging frameworks like MCP, or new layers for identity and permissions, consolidation will define where value accrues. If a standard becomes universal, its owners capture rail-like economics, much like TCP/IP or USB. If fragmentation persists, orchestration platforms that bridge multiple agents and APIs may dominate, though in a far more commoditised position. The strategic uncertainty is not whether interoperability matters, but which layer crystallises into the standard.

2. How to invest under uncertainty

For venture investors, the challenge is not simply identifying capable teams or impressive demos. It is deciding, under conditions of uncertainty, which companies are positioned to benefit when adoption bottlenecks collapse, and which will be doomed when they do not. That requires methods to surface real user constraints, frameworks to interpret technical strategies, and portfolio balance to hedge against uneven diffusion.

Revealing user beliefs and frictions. Adoption is governed less by abstract benchmarks than by the beliefs and constraints of real users. VCs have a unique role in uncovering these hidden drivers. Reference calls reveal what decision-makers and practitioners actually weigh when evaluating AI: risk tolerance, liability exposure, dependency on third-party providers, resource availability, alternatives they have tried (or failed with), and how high a priority the problem really is compared to competing demands. But these signals must be interpreted with imagination, because people rarely articulate what would be transformative in domains they have never experienced. Pre-iPhone mobile users asked for better keyboards, not multi-touch screens. The investor’s job is to read both what customers say (grounded reference work) and what they cannot yet envision, mapping today’s frictions alongside the adjacencies that could unlock tomorrow’s adoption.

Timing risk: Bottlenecks rarely erode gradually; they collapse once a tipping point is reached, triggering rapid diffusion. Compute affordability can flip overnight if new chips or optimisation techniques make inference cheap enough for mass-market use. Interoperability can inflect if a protocol like MCP is adopted by operating systems or cloud providers. Performance breakthroughs (sub-second latency, 99.99% reliability on critical tasks, etc.) can turn fragile demos into must-have enterprise workflows. For venture investors, the discipline is entering just before these inflections: early enough to ride the adoption curve, but not so early that capital sits stranded in speculative capacity. History is blunt on this point: grids, broadband, and payment rails all created sudden tipping points. The art lies in distinguishing hype from genuine signals of imminent collapse, and in paying for entry at a level the upside can still justify.

Performance vs efficiency spectrum.

AI development is not a single race but a spectrum of strategies:

- Large generalist foundation models push capability forward, often at massive CAPEX, unlocking entirely new classes of use cases when breakthroughs occur (context length, multimodality, reasoning).

- Vertical Foundation Models (a sweet spot of mine) concentrate on domains like finance, radiology, plant biology, human biology, or on specific modalities such as tabular data, speech or audio. They trade breadth for smaller training footprint and defensibility through often proprietary data.

- Small and efficient models (distilled, quantized, or edge-deployed) broaden adoption by cutting inference costs and latency. They enable applications in resource-constrained settings and drive adoption downstream.

- Applications built on third-party models avoid model development entirely, focusing instead on building robust pipelines and intuitive workflows. Their edge lies in usability and integration, not raw model capability, but they can scale explosively when they directly collapse a visible bottleneck (“the Lovables”).

Each strategy has different risk-return dynamics and interacts differently with adoption bottlenecks. Large Foundation Models (FMs) require massive capital and patience, and unlock new workflows. Vertical FMs thrive on defensibility and efficiency. Small models drive accessibility, but can get sidelined by FMs. Applications move fast and capture usability, but are fragile, and depend on timing, superiority over incumbents, and workflow fit. For investors, the art is balancing exposure across this spectrum rather than betting on a single archetype. [Note: Infra and middleware (safety, orchestration, deployment) sit between full builders and applications, shaping models without owning them. They can be read as partial-control positions along this spectrum.]

Protocol risk.

The history of technology is written by standards. Some consolidate into global rails (TCP/IP, USB), others fragment indefinitely (messaging apps), and some coexist uneasily (cloud APIs). The same dynamic is playing out in AI, whether with agent protocols like MCP, model interfaces, or evaluation frameworks. Betting on protocols is high-variance: they can produce monopolistic winners or collapse completely.

Balance in portfolio construction. Adoption will be uneven across industries and layers of the stack. Resilient portfolios balance across: very early bets and companies with traction; hard-tech infra and usable AI apps; standards plays and standalone workflow tools; fast-moving industries and regulated domains like healthcare or finance. The goal is not to predict one perfect winner, but to allocate across multiple S-curves, so that when bottlenecks collapse in one arena, the portfolio is already positioned to benefit.

The Real Work Ahead

AI is not a rupture. It is a normal technology advancing through increments, diffusing through institutions, and gated by bottlenecks. Progress is cumulative.

The mistake is to confuse marketing for inevitability. Every new model has been framed as the threshold of AGI. Every new frontier (agents, multimodality, reasoning benchmarks) has been cast as decisive. Yet adoption lags, bottlenecks endure, and workflows change more slowly than the hype cycle suggests.

This reframing matters. It matters for venture capital, where the durable firms of this cycle will not be those chasing leaderboard gains, but those that collapse bottlenecks, making compute more accessible, data more usable, models more reliable, workflows more interoperable, and compliance manageable. They are not necessarily the loudest in demos, but the most readily adopted. They will not win on benchmarks, but on integration. Adoption, not magic, is the true frontier.

It also matters for institutions and society. Regulators, procurement departments, IT, and professional bodies are not bystanders, still less obstacles, but the channels through which AI will diffuse. Their adaptations (liability frameworks, evaluation standards, compliance protocols) will determine tempo as much as technical progress.

Narratives shape these adaptations: the myth of imminent AGI pulls attention (and thus capital and talent) toward speculative endgames, while the normal technology frame encourages sobriety, focusing on adoption frictions.

And it matters for resilience. Risks are real (bias, misuse, systemic accidents), but they will manifest through adoption, not in abstraction. The challenge is not to eliminate risk before adoption, but to ensure institutions can absorb shocks as adoption unfolds. Blackouts did not halt electricity, worms did not end the internet, trial halts did not stop biotech. What mattered was whether societies built redundancy, diversity, and adaptive capacity into the systems they deployed. AI will be no different.

The lesson is simple, though its implications are demanding. The future of AI will not be decided by the brilliance of the next benchmark or the audacity of the next demo. It will be decided by when bottlenecks collapse, how institutions adapt, and whether resilience is built during the deployment phase. Progress will come not as rupture but as accumulation, not as miracle but as adoption.

For investors, policymakers, and societies alike, the task is clear: focus less on anticipating intelligence, and more on enabling integration. The real frontier is not capability, it is adoption.